Originally Published: November 2018; Revised: March 2020

Best Practice Scribes

Gareth Isaac, Director and Principal Consultant, Ortecha, a DCAM Authorized Partner

Marc McQueen, EDM Council, Senior Advisor-DCAM

Executive Summary

Objective

One of the most significant regulatory directives following the 2008 financial crisis has been the introduction of the Principles for Effective Risk Data Aggregation and Risk Reporting, or BCBS 239. The Principles outlined in this directive require banks to establish sound information infrastructures to support their risk and risk reporting functions. As part of creating the required control environment, a common practice in the financial services industry is the establishment of Critical Data Elements (CDEs).

In spite of this focus on CDEs by the financial services industry, the 2017 Data Management Industry Benchmark Study conducted by the EDM Council identified the management of CDEs as a top challenge universally across the industry. Members report uncertainty regarding the exact definition of a CDE, how it is designated, and how it should be used to satisfy the control requirement.

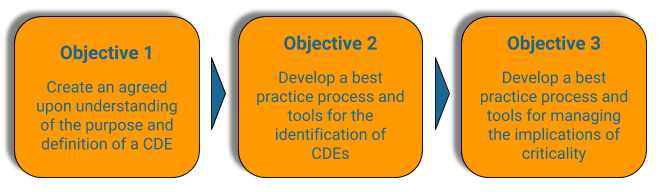

Subsequently, the EDM Council conducted 14 in-depth interviews with member firms to frame the issue. The research was organized to gain insight into how organizations define critical data, the process to identify critical data, and the implications for heightened levels of control on critical data. The research revealed that both the purpose of and the approach to CDE management remained fragmented and siloed within each organization. To address this, the EDM Council formed a CDE Best Practice workgroup. The workgroup was charged with establishing a Best Practice for the identification and management of critical data covering these three objectives.

The Best Practice provides processes, procedures, and tools for the execution of the identification of critical data all aligned and integrated with the EDM Council Data Management Capability Assessment Model (DCAM) Framework.

This document covers Objective 1 and Objective 2. Objective 3 will be included in a later publication.

Key Observations

- The purpose of a CDE is to prioritize your data based on criticality – this allows you to identify a scope of the most important data to bring into a heightened level of control and accountability

- Determining criticality is a business process perspective based on the Data Consumer process – and thus is determined at the conceptual level – the actual physical-level data elements aligned to the conceptual level inherit the criticality

- Organizations that attempted to use a precise calculation to identify criticality did not achieve adoption and ultimately abandoned the science for a more artful analysis, which included a negotiation between the Data Producer and Data Consumer to agree upon prioritized data based on criticality

- Derived data can be deemed critical; however, the implications of criticality must be applied to the atomic data that is an input to the derived value

- Granular data used in a derivation should be independently evaluated for criticality based on the material impact each has on the derived value

- Designating criticality implies a heightened level of control; these controls include governance, metadata, data flow/lineage, data quality, transformation, and movement controls

Issue

The concept of a Critical Data Element (CDE) originated within the financial services industry. In 2013 the BCBS 239 Principles were published. Principle 1 introduced the phrase ‘identify data critical to risk data aggregation.’ This phrase is now commonly referred to as identifying CDEs even though BCBS 239 does not use the term.

Since that time, financial organizations have struggled to execute a process to identify CDEs. There is confusion and variation in the method and the resulting CDEs.

To further understand the issue of managing CDEs, the Best Practice Work Group developed a set of questions to understand the current state and establish the intended scope for the Best Practice.

- What is a CDE?

- How are CDEs identified?

- What makes a data element critical?

- What are the criteria for determining criticality?

- Who determines criticality?

- What is the impact of a data element being identified as critical?

- What is an appropriate volume of CDEs?

- Can CDEs have different levels of importance?

- Are CDEs atomic elements, or derived?

- If a CDE is a derived element, does this imply that the composite elements used to create the derived element are also CDEs?

- Is it possible to identify industry-standard CDEs, or is it an organization-specific exercise?

Industry Current State

The framing questions listed above were used to learn more about the actual experiences banks had with implementing CDEs. Fourteen EDM Council member organizations were interviewed. What was learned about the current state of CDE management is summarized in the following section. The full report, titled CDE Member Research Interim Report, was published in November 2017 and is available to EDM Council members.

The member organizations selected for interviews were those whose efforts to manage CDEs were known to the EDM Council. A high degree of engagement was validated, but with significant variation across the organizations in CDE definition, volume, the process for identifying CDEs, and the level of data management rigor applied to manage them. Even in mature efforts, there was confusion and a lack of confidence in how to manage CDEs, with little to no consistency across the organization.

Current State Finding 1: No Consistent Definition of a CDE

One of the most significant challenges is the lack of consistency in distinguishing a granular data attribute from a derived or calculated business measure. Many firms are using the same terminology to describe logical concepts, business objectives, calculation processes, derived elements, and physical expression. The concepts described above are all real and essential things – but they are not the same thing – and calling them all critical data elements leads to significant confusion.

Current State Finding 2: Inconsistent Process for Designating CDEs

There was general agreement across the organizations that the business process defines criticality. Still, there was a lack of acknowledgment of all the business processes that may be consuming the same data. Their approach did not include the concept of a data supply chain. Also, the full range of stakeholders of the data often was not included in determining criticality. As a bright spot, some organizations recognized the identification of criticality as a negotiation between the data producer and the data consumer.

Some organizations had attempted to quantify criticality by applying a matrix formula to calculate an objective criticality measurement. Without exception, this absolute measurement was abandoned for more subjective analysis. (See section: Measuring Criticality: Art or Science)

Current State Finding 3: Undefined Guidelines for Managing Criticality

The designation of a data element as critical means it is covered by organizational policy and standards, resulting in increased data management rigor to achieve a heightened level of control. The following were common themes across the firms involved in the initial interviews and the subsequent analysis by the Best Practice Work Group. However, while the issues were consistent, the execution of each theme varied widely across the organizations.

- Definition and Meaning – the number one issue is the challenge of locking down a precise meaning and harmonizing language.

- Lineage – minimally, an understanding of data flow is required. Still, the difficulty, cost, and inability to adequately maintain data lineage have raised questions as to the role and value of data lineage across all CDEs.

- Data Quality – difficulty in negotiating agreement across multiple stakeholders to set criteria for fit-for-purpose, quality tolerance ranges and thresholds, business rules, testing requirements, and measurements.

- Governance – managing the relationships between the Data Producer and one or more Data Consumers is the most intensive part of the governance challenge because it requires collaboration across multiple stakeholders, who often do not have the framework, the skills, or the time from the various business process subject matter experts.

- Metadata – inconsistencies within individual organizations in the execution of standards for metadata capture led to difficulty stitching together the different approaches to metadata collection to provide an enterprise view. This has become more apparent with the higher rigor of metadata required for CDEs.

Best Practice

Stakeholders

Data Management function stakeholders include:

- Executive Leadership

- Business Executives

- Data Producer

- Data Consumer

- Data Management Practitioners (Reference: Data Management Functional Construct)

- Chief Data Officer

- Data Governance Executive

- Data Officer

- Executive Data Owner

- Data Architect

- Data Domain Manager

- Business Data Steward

- Technical Data Steward

- Business Process Subject Matter Expert

- Data Custodian

The remainder of the best practice focuses on the activities of the Data Management Practitioners.

Scope

The scope of this best practice comprises three aspects:

- Defining a Critical Data Element

- Prioritizing Data Based on Criticality

- Implications of Criticality

Description

What is a Critical Data Element?

Objective: Create an agreed-upon understanding of the purpose and definition of a CDE

To accurately define a CDE, it is necessary to place it in the context of the other things in its neighborhood.

The Data Neighborhood

The following is a construct that defines and creates relationships between all the things in the data neighborhood. It is a business-friendly representation of the data architecture that presents an understanding of the components and their relationships. With an understanding of the components, a process to identify criticality can be designed.

Business Element / Data Element Construct

Legend

- Construct components: Business Term, Business Element, Data Element, Business Metadata, Technical Metadata, Physical Metadata

- Business Element types: Atomic, Derived, Determined

- Critical designation: Critical Business Element (CBE), Critical Data Element (CDE)

The construct contains a business view and a technical view in relationship to each other. As depicted in the diagram, the players in the neighborhood include business-oriented and technically oriented resources. Separating the two views creates clearly defined accountability: the business manages the Business Element and technology manages the Data Element.

The business view defines the business process requirements for the data produced by the process. The business that owns the process is accountable for defining the requirements for the data, including the data criticality. The requirements are defined as Business Terms and Business Elements with all the appropriate business metadata. The Best Practice workgroup determined that there was sufficient difference between a Business Term and a Business Element to warrant clear separation, and that both were different from a Data Element.

Similarly, the technical view is an interpretation of the business process requirements for data, transformed into technical data requirements. The data requirements are defined as a Data Element with all the appropriate technical metadata, including the physical metadata. The use of the term Data Element is aligned to the ISO Data Element standard to ensure architectural consistency with other standards.

The Business Element is conceptual, and the Data Element is the technological execution of the Business Element. The ISO standards body has defined a “Data Element”, and the EDMC Data Neighborhood reflects the ISO definition and clearly distinguishes it from a “Business Element”.

Whether data is critical is determined from the perspective of the business process that is consuming the data. Criticality is a business designation based on an assessment of the material impact the data has on the outcome of a business process. It is this principle that places accountability on the business to identify critical data as part of the requirements for data, so the identification is part of the requirements for the Business Element. Therefore, a Business Element that is critical is a Critical Business Element (CBE) and will also have a corresponding Critical Data Element (CDE).

This will be presented more fully in the section titled Data Producer / Data Consumer Relationship.
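The relationship between the two views can be sketched as a small data structure. This is a minimal illustration, not part of the Best Practice itself: the class names, the `designate_critical` method, and the sample element and physical names are all hypothetical. It shows only the inheritance rule stated above, where criticality is set on the conceptual Business Element and the aligned Data Elements inherit it.

```python
from dataclasses import dataclass, field
from enum import Enum


class ElementType(Enum):
    """Business Element types named in the construct legend."""
    ATOMIC = "atomic"
    DERIVED = "derived"
    DETERMINED = "determined"


@dataclass
class DataElement:
    """Technical view: a physical implementation of a Business Element."""
    physical_name: str       # hypothetical identifier, e.g. schema.table.column
    critical: bool = False   # inherited from the Business Element, not set directly


@dataclass
class BusinessElement:
    """Business view: the conceptual element owned by the business process."""
    name: str
    element_type: ElementType
    critical: bool = False
    data_elements: list[DataElement] = field(default_factory=list)

    def designate_critical(self) -> None:
        """Mark this element as a CBE; every aligned Data Element becomes a CDE."""
        self.critical = True
        for data_element in self.data_elements:
            data_element.critical = True


# Hypothetical example: one conceptual element with two physical expressions.
exposure = BusinessElement(
    name="Counterparty Exposure",
    element_type=ElementType.DERIVED,
    data_elements=[
        DataElement("risk_db.exposure.amount"),
        DataElement("reporting_mart.exposure_amt"),
    ],
)
exposure.designate_critical()
cde_names = [d.physical_name for d in exposure.data_elements if d.critical]
```

Keeping the `critical` flag on the Business Element and propagating it downward mirrors the accountability split: the business decides, and the technical view follows.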

Validation

Two approaches were used to validate that the Business Element / Data Element Construct worked in real-life examples and was consistent with other architectural viewpoints.

- Use Case – apply the construct to actual data of different types and levels of complexity

- Data Architecture & Modeling – align the construct with traditional data architecture and modeling standards

For a full review of the validation analysis, please see the Best Practice: Business Element / Data Element Construct.

CDE Purpose – Prioritizing Data

The objective of prioritizing the data is to identify which data is critical to the business processes consuming the data and thus requires heightened levels of control to ensure the data is fit-for-purpose.

The Basel Committee on Banking Supervision’s standard titled Principles for Effective Risk Data Aggregation and Risk Reporting (more commonly referred to as BCBS 239) is often cited as requiring the identification of “Critical Data Elements” (CDEs), when the actual language is “data that is critical”. The related BCBS 239 citations follow:

- BCBS 239: Paragraph 16 – The Principles and supervisory expectations contained in this paper apply to a bank’s risk management data. This includes data that is critical to enabling the bank to manage the risks it faces. Risk data and reports should provide management with the ability to monitor and track risks relative to the bank’s risk tolerance/appetite.

- BCBS 239: Paragraph 30 – Senior management should also identify data critical to risk data aggregation and IT infrastructure initiatives through its strategic IT planning process.

- BCBS 239: Paragraph 43 – Supervisors expect banks to produce aggregated risk data that is complete and to measure and monitor the completeness of their risk data. Where risk data is not entirely complete, the impact should not be critical to the bank’s ability to manage its risks effectively.

BCBS 239 targets the bank’s Risk Management data, but the concept applies to all data for all business processes, not just Risk or Finance organizations.

Prioritizing Data Based on Criticality

Objective: Develop a best practice process and tools for the identification of CDEs

Overview

Since not all data has the same significance or impact, the highest-risk data needs to be addressed first. This section covers an approach using criticality to enable the organization to prioritize and bring under control its data assets. Prioritization is a key part of a successful data management program; without appropriate prioritization, the program is at risk of being overwhelmed with more data than the organization can bring under control.

Drivers of Criticality with Materiality Overlay

To identify criticality, one must introduce the concept of materiality, which aligns with the standard notion of a risk-based approach.

Some organizations have introduced levels of materiality with the highest level identified as Critical.

- Critical

- Important

- Significant

- Unimportant

- Not Reviewed

What makes data critical is the material impact it has on the outcome of a business process.

- Criticality comes from the perspective of the business process that is consuming the data (Data Consumer)

- Determining materiality is a negotiation between the Data Producer and Data Consumer

- It is common for a Consumer to assume that all data are critical

- There are drivers associated with criticality that can guide the negotiation (see next section for more details)

- Criticality has not been successfully quantified using well-defined rules, leaving it to be determined through an artful analysis rather than a hard science

Measuring Criticality: Art or Science?

Using the drivers of criticality as outlined above, a Criticality Evaluation Matrix is a valuable tool to support the decision-making process.

Many organizations initially attempted to identify criticality with a quantified calculation. This is represented in the Objective Rating row in the matrix below. The calculation may include weighting the relative importance of any of the criteria. The rating scale needs to be driven by the risk appetite of the organization.

However, member organizations that tried to quantitatively measure data criticality experienced poor adoption and ultimately switched to applying the spirit of the measurement as art rather than science, as represented in the Subjective Rating row of the matrix.

Essentially, the subjective approach uses the matrix as a guideline to identify potential critical data and assess the scope of the impact of the critical data (Global, Regional, Local). This is done to inform a negotiation between the Data Producer and Data Consumer to reach an agreement on the data that is truly critical.

| Criterion | Regulatory | Legal | Reporting Level | Reputational Impact | Financial Impact | Business Performance | Operational Risk | Risk Management |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Description | Indicates whether the data attribute is mandated by regulatory entities to be used and managed in a particular way. Data provided to regulators. Data is subject to audit or traceability. | Indicates whether the data attribute requires additional safeguarding according to Schwab’s corporate standards, i.e. it is confidential, highly confidential, proprietary and/or non-public information. | Indicates the level at which the data is consumed in the organization; Board or Management Team exposure carries higher risk. | Indicates whether a data attribute is relied upon by clients or third parties… if it is wrong, reputation and trust are placed at risk. | Indicates a quantifiable financial result of poor data quality. | Indicates whether the data attribute is essential for the management of the business, to help manage the firm’s ability to meet strategic or operational objectives. | Indicates whether the data attribute helps manage operational outcomes. Operational, upstream or downstream processes would fail if data was not present or incorrect. | Indicates whether the data attribute enables us to anticipate or manage potential risk to the business. |
| Objective Rating (Sample Scale) | 4-Global 3-Regional 2-Local | 4-Global 3-Regional 2-Local | 4-Global 3-Regional 2-Local | 4-Global 3-Regional 2-Local | 4->$X 3->$X 2-<=$X | 4-Global 3-Regional 2-Local | 4-Global 3-Regional 2-Local | 4-Global 3-Regional 2-Local |
| Subjective Rating | High / Medium / Low | | | | | | | |

Diagram 3: Criticality Evaluation Matrix
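The Objective Rating row of the matrix can be sketched as a weighted average over the eight criteria. This is only an illustration of the kind of calculation members initially attempted: the function names, the default weights, the treatment of unrated criteria as 0, and the threshold of 2.5 are all assumptions, and in practice the scale and weights would be driven by the organization's risk appetite. In line with the member experience described above, the result is best read as a flag for candidates to bring to the negotiation, not as a final criticality designation.

```python
from typing import Dict, Optional

# Criteria taken from the matrix column headings; key names are illustrative.
CRITERIA = (
    "regulatory", "legal", "reporting_level", "reputational_impact",
    "financial_impact", "business_performance", "operational_risk",
    "risk_management",
)


def objective_score(ratings: Dict[str, int],
                    weights: Optional[Dict[str, float]] = None) -> float:
    """Weighted average of per-criterion ratings (sample scale: 4, 3, 2).

    Criteria missing from `ratings` count as 0 (an assumption of this sketch);
    criteria missing from `weights` default to a weight of 1.0.
    """
    unknown = (set(ratings) | set(weights or {})) - set(CRITERIA)
    if unknown:
        raise ValueError(f"unknown criteria: {sorted(unknown)}")
    w = {c: 1.0 for c in CRITERIA}
    w.update(weights or {})
    total_weight = sum(w.values())
    return sum(w[c] * ratings.get(c, 0) for c in CRITERIA) / total_weight


def is_candidate(ratings: Dict[str, int],
                 threshold: float = 2.5,
                 weights: Optional[Dict[str, float]] = None) -> bool:
    """Flag an element for discussion; the threshold is a hypothetical value."""
    return objective_score(ratings, weights) >= threshold


# An element rated Global (4) on every criterion versus one rated on Regulatory only.
all_global = {c: 4 for c in CRITERIA}
regulatory_only = {"regulatory": 4}
```

Raising the weight on a single criterion, for example `objective_score(regulatory_only, {"regulatory": 9.0})`, shows how sensitive the result is to the weighting choice, which is one plausible reason a purely calculated designation is hard to agree on.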

Considerations

- Private or sensitive data are not identified as a criterion of criticality. While private or sensitive data can be designated as critical, that designation is due to the material impact of the data on the business process outcome – this is different from information security or privacy concerns. The materiality of poor-quality private data is measured using the criteria in the construct (e.g., Regulatory, Reporting Level, Reputational Impact, etc.). More background is available in an EDM Council Best Practice published in May 2018 titled GDPR (General Data Protection Regulation): The Role of Data Management.

- In practice, the harder job is identifying data that is not critical.

- An alternative to artfully measuring the drivers of criticality across all data may be to prioritize first by the level of the Data Consumer (e.g., Enterprise level: most important; External level: second; two or more business processes: third; etc.). The data consumed at the highest level would be evaluated for criticality first.

Prioritizing Criticality

Unlike the objective of prioritizing data based on criticality, the objective of prioritizing the critical data is to rank the most important data in priority order based on business objectives. Those ranked priorities are then used to apply a heightened level of control within the time and resource constraints of the organization. There are three primary approaches to set the scope of critical data.

- Everything: Identifying all critical data across the organization

- Scoping Strategy: Prioritizing a subset of data by prioritized Use Case

  - Regulatory oriented – high-risk reports or programs (e.g., BCBS 239)

  - Business Process oriented criticality (business-problem oriented)

  - Application oriented criticality

  - Project oriented (Fix forward – remediate backward)

- Hybrid: Set a volume or percentage of data that can be critical (set a target %)

Regardless of the approach to setting the scope, if the volume of identified elements exceeds the current capacity of the organization, it will need to prioritize the sequence of the work further.

| Prioritization Approach | Pro | Con |
| --- | --- | --- |
| Subset | The benefit of prioritizing a subset of data aligned to a priority use case is that it is quick and easy and can gain attention and support from senior management. | The risk is that you create a false impression that this is the full scope of critical data. |
| Comprehensive | The benefit of a comprehensive inventory of all critical data is that you set accurate expectations for the scope of work. | The volume of in-scope data can be overwhelming and dilute the attention and support of senior management. |
| Hybrid | Manages the expectations of the management team. | Often cannot predict the effort or timelines associated with the effort. |

Data Producer / Data Consumer Relationship

Data Supply Chain

Understanding how data exists in the Data Supply Chain is key to understanding the relationship between Data Producers and Data Consumers.

A domain consumes data from upstream producers, produces and consumes data within the domain, and also produces data for downstream consumers. Domain management includes reconciling all requirements for data across the data supply chain.

Business Process Perspective

Accountability for data is with the owner of the business process that creates the data – the Data Producer. This adds another perspective to understanding the relationship between Data Producer and Data Consumer. The Data Consumer is responsible for ensuring that the data is appropriately used and fit for purpose for their business process.

- A business process has requirements for data as inputs and outputs of the process.

- The “owner” of the business process that creates data is the Data Producer.

- A business process that consumes data from another data domain is a Data Consumer and is responsible for defining requirements for data and holding the Data Producer accountable.

- A Data Producer is responsible for meeting the data requirements of the Data Consumer. These requirements include precision of meaning, data quality dimensions, access and authorization of use, monitoring, measuring, etc.

- A Data Producer is also usually a Data Consumer. Every Data Producer consumes their own data to support their process, but often they also consume data from upstream of their operation.

- Criticality of a Business Element is proposed by the Data Consumer and validated and accepted or rejected by the Data Producer.

- The Data Producer must first determine that the requested Business Element is within the scope of their data domain before assessing the proposed criticality of the Business Element.

- The entire process between the Data Consumer and Data Producer is based on the requirements for data and use of the data in the consumer’s business process.

- The heightened level of control applied to a Critical Business Element is what permits Data Producers and Data Consumers to agree that the data consumed is fit for purpose.

The Negotiation

When the Data Consumer proposes criticality, the natural inclination is to declare all data consumed as critical to their business process. If the organization’s funding model places the accountability for funding solely on the Data Producer, there is no financial consequence to the Data Consumer for the cost of the enhanced control applied to Critical Data Elements. This results in a strain on the negotiation process between the Data Producer and Data Consumer. The Data Producer and Data Consumer will have to agree to operate within mutually defined resource constraints. The negotiation is further complicated when a Data Producer is managing priorities from multiple Data Consumers, at which point the Data Governance framework must provide an escalation protocol for mediating priorities that exceed the resource capacity of the Data Producer. The reality of resource constraints, even at the Enterprise level, requires the organization to define the level of resources that can be applied to achieve the implications of managing criticality.

Setting resource constraints aside, even when an assessment of Criticality Dimensions is used to inform the criticality designation, there will be differences in opinion that will need to be negotiated. The Data Producer needs to understand the actual use of data by the Data Consumer to reach an agreement on criticality and to ensure the data are fit for purpose for the Data Consumer.

As stated above, the negotiation process is compounded because it is not a one-to-one but a one-to-many negotiation (multiple consumers who may have variation in their requirements). One of the roles of the Data Producer is to align and manage Data Consumer requirements to develop as simple a data set as possible.

Governance of the process of agreeing to criticality needs to include an opportunity for escalation when the Data Producer and Data Consumer data domains cannot reach an agreement on criticality or cannot prioritize criticality within the resource constraints.
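The sequence described in this section (consumer proposes, producer checks scope, producer accepts or rejects, unresolved cases escalate) can be sketched as a small decision function. This is a hypothetical simplification: the `Outcome` names, the function signature, and the boolean inputs stand in for what is in reality a fact-based discussion between people, and the sample domain contents are invented.

```python
from enum import Enum
from typing import Set


class Outcome(Enum):
    OUT_OF_SCOPE = "out of scope for this producer's data domain"
    ACCEPTED = "criticality agreed"
    REJECTED = "criticality not agreed; consumer accepts the decision"
    ESCALATED = "referred to data governance for mediation"


def review_proposal(element: str,
                    domain_scope: Set[str],
                    producer_accepts: bool,
                    consumer_concedes: bool = False,
                    capacity_available: bool = True) -> Outcome:
    """Walk one criticality proposal from a Data Consumer through the Data Producer."""
    # Step 1: the producer first confirms the element belongs to their domain.
    if element not in domain_scope:
        return Outcome.OUT_OF_SCOPE
    # Step 2: the producer assesses the proposed criticality.
    if producer_accepts:
        # Agreement that cannot be resourced still needs governance mediation.
        return Outcome.ACCEPTED if capacity_available else Outcome.ESCALATED
    # Step 3: disagreement either ends with the consumer conceding or escalates.
    return Outcome.REJECTED if consumer_concedes else Outcome.ESCALATED


# Hypothetical producer domain with two Business Elements in scope.
scope = {"Counterparty Exposure", "Legal Entity Identifier"}
result = review_proposal("Counterparty Exposure", scope, producer_accepts=True)
```

Modeling escalation as an explicit outcome, instead of treating it as an error, reflects the point above that the governance framework must provide a defined path for cases that exceed the producer's capacity or where the parties cannot agree.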

Considerations

- For the negotiation to be effective, it must be fact-based, with transparency between the Data Consumer’s requirements and the Data Producer’s assessment of those requirements. This transparency is even more critical when subject matter expertise about the business process and the data exists on only one side of the negotiation.

- Alignment to the organization’s funding model is critical.

- Alignment to the organization’s governance model is critical.

Derived Data

As part of the exploration of the Data Neighborhood, there are often questions about whether Derived Data can be considered critical, or even whether it should be managed. This section aims to define what Derived Data is and how it fits into criticality and broader data management.

While a derived data value can be a Critical Business Element, criticality is managed at the atomic level. In the case of a derived Critical Business Element, the Data Producer should evaluate the inputs to determine if they are also critical. Each input value should be judged separately for its material effect on the fit-for-purpose quality of the derived value. Do not assume that all inputs will have a material impact on the quality of the derived output and ultimately on the business process outcome.

Furthermore, if the inputs to the derived CBE are themselves derived Business Elements, first determine the material impact of poor quality of each of the derived inputs. If the impact is deemed material, the Business Element should be defined as critical, and its inputs will then need to be evaluated for material impact. This deconstruction process must continue until all inputs are at an atomic level (or back to a point where adequate control of the element is demonstrated), and this helps inform the Data Lineage effort often associated with Critical Elements. The quality of a derived Critical Business Element is managed at the atomic level of its “critical” inputs.
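The deconstruction process can be sketched as a recursive walk over the inputs of a derived element. This is a minimal sketch under stated assumptions: the `Element` class, the `material` and `controlled` flags, and the sample element names are hypothetical, and materiality is recorded on the input itself for brevity even though in practice it is a judgment about that input's effect on a specific derived value.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Element:
    name: str
    inputs: List["Element"] = field(default_factory=list)  # empty means atomic
    material: bool = True     # judged to materially affect the derived parent
    controlled: bool = False  # adequate control already demonstrated at this point


def critical_inputs(element: Element) -> List[str]:
    """Names of the inputs at which quality of a derived CBE should be managed."""
    found: List[str] = []
    for inp in element.inputs:
        if not inp.material:
            continue  # immaterial inputs do not inherit criticality
        if not inp.inputs or inp.controlled:
            found.append(inp.name)  # stop at atomic or already-controlled elements
        else:
            found.extend(critical_inputs(inp))  # keep deconstructing derived inputs
    return found


# Hypothetical derivation: a derived measure built from one derived and two atomic inputs.
exposure = Element("exposure", inputs=[Element("notional"), Element("fx_rate")])
measure = Element("derived_measure", inputs=[
    exposure,
    Element("risk_weight"),
    Element("display_label", material=False),
])
managed_at = critical_inputs(measure)
```

The `controlled` flag corresponds to the stopping condition in the text, "back to a point where adequate control of the element is demonstrated", and bounds how far back the walk needs to go.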

The quality of the inputs and the execution of the business logic of the derivation are a Data Management accountability. The actual business logic of the derivation is a business process accountability.

When derivations create new Business Elements, they should be recorded and an accountability review performed on the new Business Elements. If the subject matter expertise for the new data lies with the Data Consumer, or the data is derived from multiple Data Producers, then the accountability should shift from the original Data Producer(s) to the producer of the new derived data. Regardless of who has accountability, the principle of the Authoritative Provisioning Point should be maintained so that all consumers of the Business Element obtain the element from a single point of provisioning.

Considerations

- How far back do you go to get to and manage the inputs?

CDE Implications

Objective: Develop a best practice process, procedures, and tools for managing the implications of criticality

The final objective of the Best Practice project, still a work in progress, is to articulate the implications of criticality as the heightened level of control requirements for the Critical Data Elements. As the work is completed, a subsequent report will be published. The target areas for analysis include the following.

Governance – Engaged Governance – executive owners and Business and Technical stewards in place for every CDE, with collaboration among producers, consumers, IT, and operations

Definition – Precise Meaning – for all front-to-back applications, for all business processes, and for all derived formulas

Metadata – Documentation and Metadata – names, definitions, aliases, business rules, provisioning points, authorized data sources, source of data, transformation processes, logical-to-physical mapping, etc.

Data Lineage (vs Data Flow) – End-to-End Lineage – may be required to complete the data forensics needed to fix the root cause of poor-quality data (capturing all transformations and calculations across the full business lifecycle)

Data Quality – Fit-for-Purpose – quality measurements, quality thresholds, defect management, root cause analysis, and remediation

DCAM Framework Alignment

All best practice aligns with the Capabilities and Sub-capabilities as defined in the DCAM Framework. The DCAM est le What description of the Capabilities needed for a comprehensive and successful Data Management initiative. The best practice article describes the industry-standard Comment execution of the Capabilities in an organization. For more insight, see, Using A Best Practice Article.

Capability Alignment

The capabilities required for the process of prioritizing data based on criticality align to four key Components of the DCAM Framework.

| Component | Capability Requirement |
| --- | --- |
| 7.0 Data Control Environment | ● The Data Domain Management process is in the Data Control Environment Component. ● The process of prioritizing data based on criticality resides within the Data Domain Management process. |
| 3.0 Business & Data Architecture | ● The process of prioritizing data based on criticality is dependent on defining requirements for data, identifying data, defining data, and profiling data. |
| 5.0 Data Quality Management | ● The process of prioritizing data based on criticality does not require the Data Quality Management Component. Data Quality Management is part of the overall Data Domain Management and will be integral to the process of managing the implications of criticality. |
| 6.0 Data Governance | ● The process of prioritizing data based on criticality leverages the Data Governance Component for approving the metadata and the criticality designation. |

Diagram 6: Capability Requirement

Design Requirements

The Work Group determined that the execution of these capabilities would take place in the Data Domain Management process, which brings together Data Architecture, Data Quality Management, and Data Governance activities and applies them collaboratively to a specific data domain.

Data Management Processes

A version of the Data Domain Management process had not been developed by the EDM Council, so the Work Group developed a Level 2 process design and then took that design to Level 3 for those process steps that were required to prioritize data based on criticality.

The full presentation of the Data Domain Management process and how it supports the process of prioritizing data based on criticality is available as a separate best practice process design titled: Data Domain Management Process.

Data Management Tools

Within the Level 3 process designs, tools are identified that support the execution of the process. Sample designs of these tools are not yet available. Users of this best practice are invited to provide comments or upload examples at the end of this article.

The tools identified are as follows:

- Data Sharing Agreement – an agreement that sets out a common set of rules between the Data Producer and the Data Consumer, establishing the terms and restrictions on the use of consumed data.

- Service Level Agreement – an agreement between a service provider and a service consumer, minimally covering quality, availability, and responsibilities.

- Business Element Request Form – a form that includes a standard set of required and optional (if known) attributes necessary to accurately communicate the request to the Data Producer.

Requirements for Data

Every business process, including the data management processes, includes requirements for data as an input and output of the process design.

The requirements for data defined for prioritizing data based on criticality are classification data as follows:

- Critical Business Element flag

- Critical Data Element flag

- Critical Prioritization flag

- Data Consumer Name

- Data Sharing Agreement criteria

- Service Consumer Name

- Service Level Agreement criteria
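The classification data listed above could be captured as a simple record. The following is a minimal sketch under stated assumptions, not a prescribed schema: the class name `CriticalityClassification`, the `business_element` key, the attribute names, the choice of boolean flags and free-text criteria, and the sample values are all hypothetical.

```python
from dataclasses import dataclass, fields
from typing import List, Optional


@dataclass
class CriticalityClassification:
    """Classification data for one Business Element (hypothetical layout)."""
    business_element: str                       # assumed key, not in the list above
    critical_business_element: bool = False     # Critical Business Element flag
    critical_data_element: bool = False         # Critical Data Element flag
    critical_prioritization: bool = False       # Critical Prioritization flag
    data_consumer_name: Optional[str] = None
    data_sharing_agreement_criteria: Optional[str] = None
    service_consumer_name: Optional[str] = None
    service_level_agreement_criteria: Optional[str] = None

    def unpopulated(self) -> List[str]:
        """Return the names of attributes that have not been captured yet."""
        return [f.name for f in fields(self) if getattr(self, f.name) is None]


# Hypothetical example record with only some attributes captured.
record = CriticalityClassification(
    business_element="Counterparty Rating",
    critical_business_element=True,
    data_consumer_name="Credit Risk Reporting",
)
print(record.unpopulated())
# prints ['data_sharing_agreement_criteria', 'service_consumer_name', 'service_level_agreement_criteria']
```

Keeping the flags and the agreement criteria on one record makes it easy to see which classification attributes are still outstanding for a given element.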

Appendix

About the Work Group

One of the most significant regulatory directives since the 2008 financial crisis has been the introduction of the “Principles for Effective Risk Data Aggregation and Risk Reporting” or BCBS 239. The Principles outlined in this directive require banks to establish sound information infrastructures to support their risk and risk reporting functions. As part of creating the required control environment, a common practice in the financial services industry is the establishment of CDEs or “critical data elements”.

In the 2017 Industry Benchmark Study, the management of CDEs was identified as a top challenge universally across the industry. Members report that there is uncertainty regarding the exact definition of a CDE, how it is designated, and how it should be used to satisfy the control requirement.

In August 2017, the Council held a CDE webinar briefing for all members to propose a work effort to develop a best practice recommendation for identifying and managing CDEs. The forum was an open invitation for representatives from member organizations to join a Work Group. The Work Group was then formed and today contains approximately 60 members representing all aspects of the industry (GSIBs, SIFIs, buy-side, sell-side, geographic, consultants, vendors).

The project objective was to create an agreed-upon understanding of the purpose and definition of a CDE and then, based on that purpose and definition, to develop a best practice process, procedures, and tools for the identification of CDEs and for managing the implications of criticality. The execution of the process, procedures, and tools will be aligned with the DCAM Framework and the Data Management capabilities it defines. The output of this effort will be shared with banks and regulatory bodies alike.

Work Group Members – organization affiliation as of November 2018

Arzaga, Raymund – Scotiabank

Atkin, Mike – EDMC

Bala, Sathya – Deutsche Bank

Bersie, Bret* – US Bank

Bland, Karen* – Moody’s Corporation

Brophy, Doris – Societe Generale

Deligiannis, Greg – S&P Global Ratings

Dewsbury, Jeff – DTCC

Dimitrion, Genevey – State Street

Doyle, Martin* – DQ Global

Farenci, Susan – MUFG Union Bank

Finnen, Michael – Mitsubishi UFJ Financial Group

Fruhstuck, Mary – BNY Mellon | Pershing

Giardin, Christopher – IBM Hybrid Cloud

Gordon, Andrew – Deutsche Bank

Hawkins, Matthew* – Goldman Sachs

Isaac, Gareth* – Ortecha

Jeffries, Denise

Keslick, Rob – BMO

Klaentschi, Kathryn

Lawson, Andrew – Brickendon

Liu, Irene – PWC

McAdams, Curtis

McQueen, Mark* – EDMC / Ortecha

Nham, Annie – Macquarie Group Limited

Pandya, Hiten* – HSBC Bank

Robeen, Erica – Mastercard

Rolles, Daniel – EXL Service Holdings, Inc.

Roper, Michael

Sondhi, Alok – DTCC

Tang, Alec – ADIA

Townsend, Millie – Charles Schwab

Zlat, Olga – Vanguard

* Data Architecture Subgroup Member

About the Authors

Gareth Isaac is a Partner in Ortecha, an EDM Council DCAM Authorized Partner data consultancy located in the UK and the US. He is a professional data practitioner who works with stakeholders – both leadership and subject matter experts – to understand the complex challenges involved with improving processes and data throughout the end-to-end information lifecycle. Gareth has worked with multiple GSIBs over the years to help improve their data management practices, specializing in data lineage, control frameworks, and governance functions.

gareth.isaac@ortecha.com

+44 20 3239 3823

Mark McQueen is the Senior Advisor, DCAM to the EDM Council. He joined the Council in 2016 and now leads the Best Practice Program to develop Data Management industry-standard processes for executing the DCAM Framework. Mark spent over 20 years with a Fortune 25 GSIB, where he was the business Data Management Executive for the Wholesale Bank. In addition to Best Practice Program facilitation, he provides training and EDMC Advisory Services related to the adoption and execution of the DCAM Framework in member organizations.

Mark is a DCAM Certified Trainer, Six Sigma Black Belt Certified, and Strategic Foresight accredited – University of Houston.

Mark is a Partner in Ortecha, an EDM Council DCAM Authorized Partner data consultancy located in the UK and the US.

mmcqueen@edmcouncil.org

+1 615.308.6465

Revision History

| Date | Author | Description |
| --- | --- | --- |
| November 2018 | Gareth Isaac, Mark McQueen | Initial Publication |
| March 2020 | Mark McQueen | Knowledge Portal Release; Broke out BE/DE Construction & Data Domain Management Process into Separate Articles |